Executive Summary

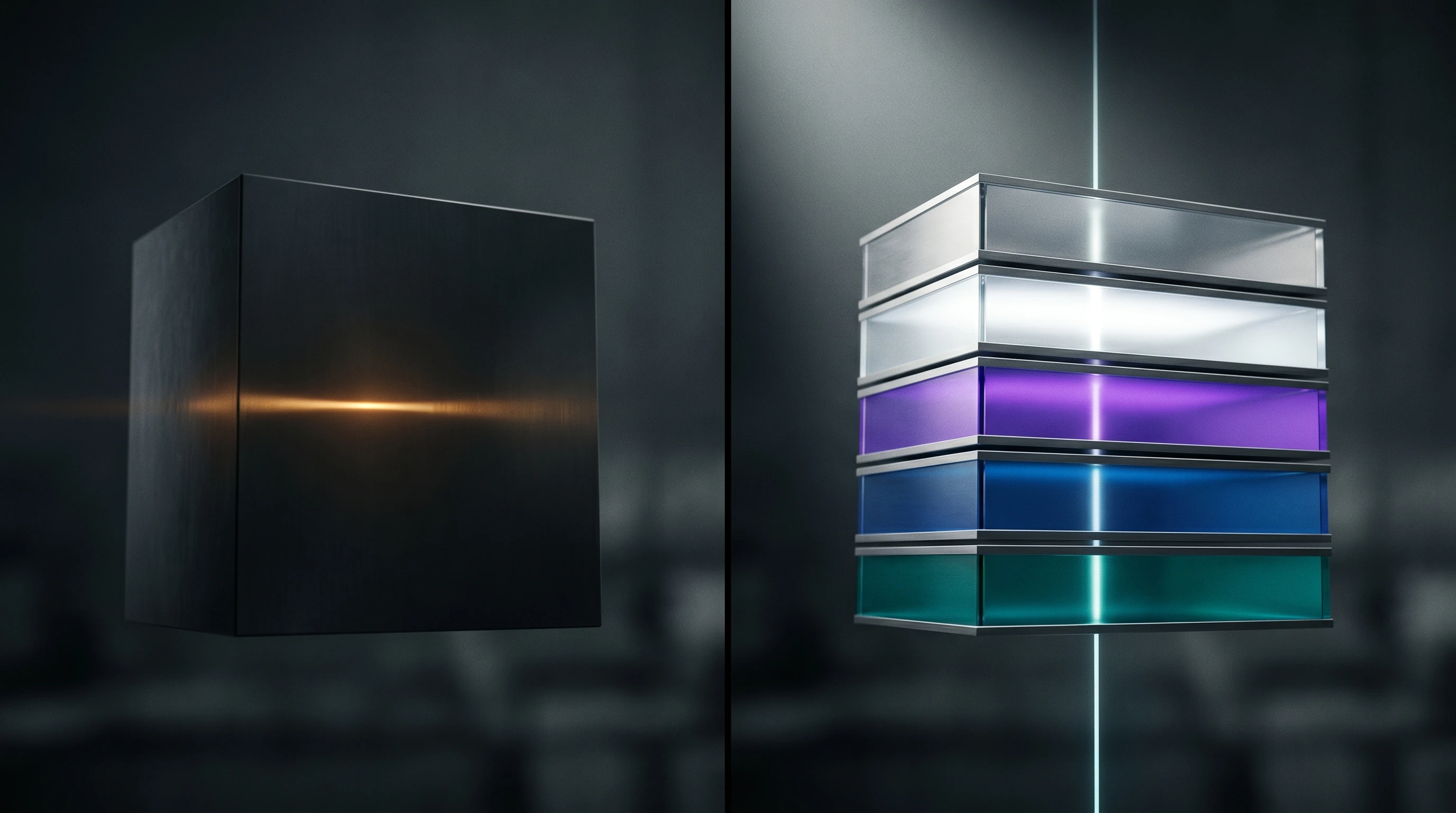

Three concurrent signals on May 14, 2026 confirm that the agent production stack is undergoing a structural stratification: five distinct product categories — memory, skills, evaluation, sandbox, and harness — have each reached the point where competing products exist, benchmarks are published, and pricing decisions are happening independently of the layers above and below.

In a single 48-hour window, GitHub Trending surfaced tools spanning all five categories simultaneously. LangChain launched seven discrete products at Interrupt 2026 SF targeting the evaluation and observability category. A formal academic paper from the Chinese University of Hong Kong (SkillRAE) proposed a mathematical framework for treating skills as first-class retrieval objects rather than passive text. Newsletter and social signals confirmed that "harness engineering" and "context engineering" are now recognized practitioner specializations with stable vocabularies.

The diagnostic test for whether a software layer has become a product category is straightforward: can you benchmark it, buy alternatives for it, and price it independently of what runs above and below? By that test, all five layers of the agent stack have crossed the threshold in the May 2026 cycle. The implication for teams building production agent systems is that the architectural question is no longer "which LLM do we use" but "which memory, which skills infrastructure, which harness, which eval pipeline" — and each of those now has a real vendor selection decision attached to it.

What's Shifting

The dominant pattern in 2024–2025 agent infrastructure was flat: connect an LLM to a set of tools, prompt it carefully, observe the results. The architecture felt like one product with optional plug-ins — the model was the product, and everything else was scaffolding.

The May 2026 signal cluster shows that scaffolding has become infrastructure. Each former plug-in category now has multiple products competing on quantified benchmarks.

The memory layer was the first to stratify. agentmemory (github.com/rohitg00/agentmemory) published head-to-head benchmark results against named competitors: 95.2% R@5 on LongMemEval-S against mem0 at 68.5% and Letta at 83.2%. The moment a layer has quantified comparisons between three competing products, it has become a product category. The architectural bet underneath agentmemory — hybrid BM25 + dense vector + knowledge graph retrieval fused via Reciprocal Rank Fusion (k=60), with Ebbinghaus decay applied to temporal relevance — is an argument about memory engineering that is entirely independent of which LLM runs on top of it. The claimed ~92% token reduction versus loading full project files is separately verifiable through instrumentation, and the conservative defaults (AGENTMEMORY_AUTO_COMPRESS=false, INJECT_CONTEXT=false) indicate production-grade posture rather than demo readiness.
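The retrieval design named above can be sketched in a few lines. This is a minimal illustration of Reciprocal Rank Fusion with k=60 plus an Ebbinghaus-style forgetting curve, not agentmemory's actual code. The function names, the 30-day stability constant, and the choice to multiply the fused score by the decay factor are all assumptions made for the example.

```python
import math
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists of ids: each list contributes 1 / (k + rank) per id."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return dict(scores)


def retention(age_days, stability_days=30.0):
    """Ebbinghaus-style forgetting curve exp(-t / S); S is a hypothetical constant."""
    return math.exp(-age_days / stability_days)


def fuse_with_decay(rankings, age_days_by_id, k=60, stability_days=30.0):
    """Rank ids by fused score times temporal retention (assumed combination rule)."""
    fused = reciprocal_rank_fusion(rankings, k=k)
    decayed = {
        doc_id: score * retention(age_days_by_id.get(doc_id, 0.0), stability_days)
        for doc_id, score in fused.items()
    }
    return sorted(decayed, key=decayed.get, reverse=True)


# Hypothetical result lists from the three retrievers, best match first.
bm25 = ["m1", "m2", "m3"]
dense = ["m2", "m1", "m4"]
graph = ["m2", "m5"]
ages = {"m1": 1, "m2": 90, "m3": 2, "m4": 0, "m5": 10}  # age in days

print(fuse_with_decay([bm25, dense, graph], ages))
# ['m1', 'm4', 'm3', 'm5', 'm2']
```

Without decay, m2 would rank first because all three retrievers return it. At 90 days old it falls to last place, which is the temporal-relevance effect the design is arguing for. None of this depends on which model consumes the results.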

The skills layer is following the same trajectory with slightly less maturity. Anthropic's official skills repository (github.com/anthropics/skills) ships a Skills specification, production skills for document creation (DOCX, PDF, PPTX, XLSX), and a plugin marketplace model built against the emerging agentskills.io cross-agent standard. Hugging Face simultaneously ships skills as first-class installable infrastructure: hf-cli-skill, llm-trainer-skill, gradio-skill, and dataset-skill, which Claude Code or Gemini CLI can call to fine-tune models or launch remote compute jobs on HF infrastructure (youtube.com/watch?v=OV56RddyFuU). The SkillRAE paper from CUHK formalizes the core claim: a skill is an operator that requires a compilation pass, not passive text that an LLM can reason its way through at inference time (youtube.com/watch?v=jIctzTn_8-E). The agentskills.io standard is referenced by spec-kit (80+ community extensions) and anthropics/skills, among others, so the standardization race has started.

The evaluation and observability layer received its biggest single-day push in the LangChain Interrupt 2026 SF launch cluster. LangSmith Engine (langchain.com/blog/introducing-langsmith-engine) automates the trace→issue-cluster→fix-PR→regression-eval pipeline, collapsing thousands of individual trace failures into a small set of prioritized issues and drafting code fixes as ready-to-merge PRs. Alongside it, SmithDB, a purpose-built agent observability database built on Apache DataFusion and Vortex, claims 12× performance on agent-specific access patterns compared to general-purpose infrastructure, a gain it attributes to co-designing the data layer around the access pattern: thousands of intermediate spans per agent run, unbounded payload sizes, and nested event structures. LangChain Labs, announced simultaneously, is a new applied research division focused on continual learning, partnering with PrimeIntellect for self-improving agent deployment.
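The first step of that pipeline, collapsing many trace failures into a few issues, can be illustrated with a toy grouping pass. This sketch assumes each trace is a dict with an optional "error" string and clusters by a normalized error signature. It is a stand-in for the idea only; the source does not describe how LangSmith Engine clusters traces, and both function names are hypothetical.

```python
import re
from collections import Counter


def signature(error_message):
    """Normalize an error string so messages differing only in ids or numbers match."""
    msg = re.sub(r"0x[0-9a-fA-F]+", "<hex>", error_message)
    msg = re.sub(r"\d+", "<n>", msg)
    return msg.strip().lower()


def cluster_failures(traces):
    """Return (signature, count) pairs for failed traces, most frequent first."""
    counts = Counter(signature(t["error"]) for t in traces if t.get("error"))
    return counts.most_common()


# Hypothetical traces; a real trace would carry spans, payloads, and metadata.
traces = [
    {"run_id": "a", "error": "Timeout after 30s calling tool 12"},
    {"run_id": "b", "error": "Timeout after 45s calling tool 7"},
    {"run_id": "c", "error": None},
    {"run_id": "d", "error": "KeyError: 'user_id'"},
]

for sig, count in cluster_failures(traces):
    print(count, sig)
# 2 timeout after <n>s calling tool <n>
# 1 keyerror: 'user_id'
```

A production system would likely cluster on richer signals than the error string, but the output has the same shape: a short, prioritized list instead of thousands of individual failures.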

The sandbox and isolation layer saw NVIDIA make its entry. OpenShell (github.com/NVIDIA/OpenShell) applies declarative YAML policies across four distinct domains (filesystem, network, process, inference). A Layer 7 HTTP proxy intercepts every outbound agent call, swaps credentials while routing, and enforces policy before any external service sees a request. OpenShell competes conceptually with trycua/cua (github.com/trycua/cua), which emphasizes cross-OS sandboxing (Linux/macOS/Windows/Android) with built-in trajectory recording for RL training. NVIDIA is building in this space not as a research demo but as alpha infrastructure with Helm charts, GPU passthrough via CDI, and an explicit multi-tenant enterprise roadmap, which signals that agent isolation has crossed from implementation detail to infrastructure product.
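The enforcement point described above, a proxy that checks each outbound call against declarative policy before forwarding it, reduces to a default-deny lookup. The policy shape and the `is_allowed` helper below are hypothetical and cover only the network domain; they are not OpenShell's schema or API, which the source does not spell out.

```python
from urllib.parse import urlsplit

# Hypothetical policy, written as the dict a YAML file might load into.
POLICY = {
    "network": {
        "allow": [
            {"host": "api.example.com", "methods": ["GET", "POST"]},
        ]
    }
}


def is_allowed(policy, method, url):
    """Allow a request only if an explicit rule matches its host and method."""
    host = urlsplit(url).hostname
    for rule in policy["network"]["allow"]:
        if rule["host"] == host and method.upper() in rule["methods"]:
            return True
    return False  # default deny: anything not listed is blocked


print(is_allowed(POLICY, "GET", "https://api.example.com/v1/items"))     # True
print(is_allowed(POLICY, "DELETE", "https://api.example.com/v1/items"))  # False
print(is_allowed(POLICY, "GET", "https://unknown.example.org/"))         # False
```

Because the check runs at the proxy rather than inside the agent, the agent cannot bypass it by changing its own prompt or tools.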

The harness layer sits above all the others and is the most contested, because it claims coordination rights over the entire stack. LangChain's deepagents v0.6 introduces ContextHubBackend as the centralized store for "skills, policies, and memories that shape agent behavior," alongside per-model harness profiles. GitHub Copilot SDK (github.com/github/copilot-sdk) exposes a JSON-RPC composition architecture across six languages with BYOK (OpenAI, Azure AI Foundry, Anthropic) removing the subscription prerequisite. iii-hq/iii reduces the harness problem to three orthogonal primitives (Worker, Trigger, Function), arguing that the entire quadratic integration cost of adding services disappears when the composition surface is minimal enough for an agent to reason about the full system in a single context window.
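The quadratic-cost argument is easy to check with a count. The sketch below assumes the worst case, in which every service must integrate directly with every other, and compares it with one adapter per service against a shared composition surface. Both function names are illustrative.

```python
def point_to_point_links(n_services: int) -> int:
    """Pairwise integrations when every service talks to every other service."""
    return n_services * (n_services - 1) // 2


def shared_surface_links(n_services: int) -> int:
    """Integrations when each service adapts once to a common set of primitives."""
    return n_services


for n in (5, 10, 20):
    print(n, point_to_point_links(n), shared_surface_links(n))
# 5 10 5
# 10 45 10
# 20 190 20
```

Real systems rarely need every pair connected, so the gap here is an upper bound. It still shows why a small, fixed set of primitives is attractive as the number of services grows.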

Evidence

The strongest evidence in this cycle is not any single product but the simultaneity: all five layers trending or launching within a 48-hour window across four independent source types (GitHub Trending, YouTube, X/Twitter, email newsletters).

On the memory layer: agentmemory's LongMemEval-S results are the first published three-way benchmark comparison in the memory MCP space. The ~27-point gap between agentmemory (95.2%) and mem0 (68.5%) on a 500-question recall benchmark is large enough to be decision-relevant in procurement contexts, even accounting for the methodological caveat that LongMemEval-S and LoCoMo are different datasets. The token-reduction claim (loading full project files at ~19.5M tokens/year versus targeted memory retrieval at ~170K) is structurally verifiable: teams with cost visibility in production agent systems can instrument this in a week. The conservative engineering defaults are a secondary signal of maturity: shipping with compression and context injection disabled by default suggests a memory layer shaped by real production use, though the defaults alone do not prove it.
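The figures in this paragraph can be reproduced with a few lines of arithmetic. The sketch below uses a common hit-rate reading of R@5 (a question counts as recalled if any gold item appears in the top five results), which is an assumption; the benchmark's own scoring script may define it differently. The helper names are illustrative.

```python
def recall_at_k(ranked_ids_per_question, gold_ids_per_question, k=5):
    """Fraction of questions with at least one gold id in the top-k results."""
    hits = 0
    for ranked, gold in zip(ranked_ids_per_question, gold_ids_per_question):
        if set(ranked[:k]) & set(gold):
            hits += 1
    return hits / len(gold_ids_per_question)


def token_reduction(baseline_tokens, retrieved_tokens):
    """Share of tokens saved relative to the baseline."""
    return 1.0 - retrieved_tokens / baseline_tokens


gap_points = 95.2 - 68.5  # agentmemory vs mem0, as reported
yearly_reduction = token_reduction(19_500_000, 170_000)

print(f"{gap_points:.1f}")        # 26.7
print(f"{yearly_reduction:.1%}")  # 99.1%
```

The yearly figures work out to roughly a 99% reduction, which is higher than the ~92% quoted earlier. The two numbers presumably use different baselines or measurement windows, and that is the kind of detail a team's own instrumentation would settle.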

On the skills layer: SkillRAE's core experimental result is that LLMs running self-generated skills produce "near-no-skill performance" across three frontier models (Haiku 4.7, Opus 4.6, and Gemini 3). The gap between curated-skill performance and self-generated-skill performance is the formal argument for why the skills layer must exist as infrastructure independent of the model layer. Self-generated skills fail because the LLM cannot resolve cross-skill dependencies at inference time without a prior compilation pass that inlines sub-unit dependencies into a fully resolved context. This framing, skill retrieval as compilation rather than search, is a structural claim about the architecture, not an artifact of benchmark size.
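What a compilation pass that inlines dependencies means in practice can be shown with a generic dependency resolver. This is not SkillRAE's algorithm, which the source does not detail. It is a plain depth-first resolution over a hypothetical skill registry, in which each skill has a text body and a list of skills it requires.

```python
def compile_skill(root, registry):
    """Return one context string with every dependency inlined before its dependents."""
    resolved = []      # skill names in dependency-first order
    visiting = set()   # names on the current path, used to detect cycles

    def visit(name):
        if name in resolved:
            return
        if name in visiting:
            raise ValueError(f"dependency cycle at {name!r}")
        if name not in registry:
            raise KeyError(f"unknown skill {name!r}")
        visiting.add(name)
        for dep in registry[name]["requires"]:
            visit(dep)
        visiting.discard(name)
        resolved.append(name)

    visit(root)
    return "\n\n".join(registry[name]["body"] for name in resolved)


# Hypothetical registry: "report" needs "chart" and "table"; "chart" needs "table".
registry = {
    "table": {"body": "TABLE: how to build a table", "requires": []},
    "chart": {"body": "CHART: how to plot a table", "requires": ["table"]},
    "report": {"body": "REPORT: assemble charts and tables", "requires": ["chart", "table"]},
}

print(compile_skill("report", registry))
```

The result is a single resolved context in which the table instructions appear once, ahead of the chart and report instructions that depend on them. The model no longer has to discover that ordering at inference time.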

On the evaluation layer: LangSmith Engine's production deployments at Cogen and Campfire confirm that the evaluation category is operating in live systems, not research environments. SmithDB's 12× performance claim on agent-specific access patterns is structurally plausible: a columnar stack (DataFusion as the query engine, Vortex as the columnar file format) with nested-document support can materially outperform row-oriented OLTP databases on trace-style analytical workloads, particularly when payloads are large and unbounded. The architectural decision to build a purpose-specific database rather than adapt PostgreSQL or SQLite is itself evidence that the access patterns of agent observability have diverged enough from general-purpose infrastructure to justify a dedicated data store.

On the harness and context layers: the newsletter signals show practitioner vocabulary stabilizing. Yanli Liu's "Harness Engineering: What Every AI Engineer Needs to Know in 2026" (2.1K Medium claps) and Vinayak Gole's "Context Engineering: The Technical Blueprint for Production-Grade AI Agents" treat harness and context as named engineering specializations with independent taxonomies. The article "Context Is The New Code" (Kushal Banda) extends this to a claim about where the productive unit of AI engineering is shifting. When practitioner-facing content about a technical layer reaches 2K+ engagements on a single article, the layer has crossed from expert knowledge to mainstream engineering discipline.

The financial confirmation comes from Granola's March 2026 Series C: $125M at a $1.5B valuation (Index Ventures + Kleiner Perkins), with 250% revenue growth in the quarter before the raise. Granola explicitly repositioned from "AI note-taker" to "context layer underneath every AI workflow." The named enterprise clients (Vanta, Cursor, Lovable, Mistral, Asana, Gusto, Thumbtack, Decagon) are disproportionately AI-native companies that would immediately recognize whether they were paying for a notes app or for infrastructure. A $1.5B valuation for a context-layer product is the financial system confirming that a distinct product category exists and commands independent pricing.

Countertrends

Not everyone is building vertically. Three distinct pushback signals are worth tracking as they represent genuine architectural alternatives rather than noise.

The strongest is the primitive-minimalist counterargument. Daniel Miessler's Personal AI Infrastructure v5.0.0 explicitly rejects layered-stack complexity with the line "No RAG, ever, since June 2025" and substitutes filesystem + ripgrep as the index (github.com/danielmiessler/Personal_AI_Infrastructure). His "bitter-pilled engineering" principle removes over-prescription as models get stronger, treating added infrastructure as technical debt rather than leverage. With 45 skills, 171 workflows, and 37 hooks, PAI reaches an agent capability surface area comparable to the layered approach without the abstraction overhead. The iii-hq/iii philosophy carries the same structural argument: "agents reason better about systems with fewer, more orthogonal primitives." Both are coherent counter-theories to the stratification trend, not failure cases.

The economic countertrend is orchestration cost. "The Orchestration Tax: Why Multi-Agent Systems Get Expensive" (Deepanshu Gupta, Towards AI), which surfaced in the email-newsletter signal cluster with featured-article placement, addresses the failure mode of stratification directly: every additional layer in the stack adds latency, context cost, and operational complexity. In practice, a memory retrieval call, a skills compilation pass, a sandbox startup, and an eval trace capture on every agent turn can plausibly add 200–500ms and significant per-call cost relative to a bare-prompt architecture. For high-frequency, low-stakes agent calls, the overhead-to-value ratio inverts quickly.
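A per-turn overhead budget makes the orchestration tax concrete. Every number below is hypothetical and chosen only to land inside the 200–500ms range mentioned above. The `LayerCost` type and both helpers are illustrative, and a real budget should be built from measured latencies and invoices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayerCost:
    name: str
    latency_ms: float
    usd_per_call: float


def per_turn_overhead(layers):
    """Total added latency (ms) and cost (USD) if every layer runs on every turn."""
    total_ms = sum(layer.latency_ms for layer in layers)
    total_usd = sum(layer.usd_per_call for layer in layers)
    return total_ms, total_usd


def monthly_overhead_usd(layers, turns_per_day, days=30):
    """Overhead cost per month at a given turn volume."""
    _, usd_per_turn = per_turn_overhead(layers)
    return usd_per_turn * turns_per_day * days


# Hypothetical per-call figures for the four layers named in the text.
LAYERS = [
    LayerCost("memory retrieval", 80, 0.0004),
    LayerCost("skills compilation", 60, 0.0002),
    LayerCost("sandbox startup", 150, 0.0010),
    LayerCost("eval trace capture", 20, 0.0001),
]

ms, usd = per_turn_overhead(LAYERS)
print(f"{ms:.0f} ms and ${usd:.4f} per turn")
print(f"${monthly_overhead_usd(LAYERS, turns_per_day=100_000):,.0f} per month")
```

At these assumed rates the stack adds 310 ms per turn and about $5,100 a month at 100,000 turns a day. That overhead is easy to justify for a long-running coding task and hard to justify for a high-frequency, low-stakes call.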

The third countertrend is vendor selection caution. The warning from @clairevo at LangChain Interrupt — "We are pre-convergence on tools — keep your organizational options open" — and Nate Herk's recommendation to "build flexible projects that can move between Claude Code, Codex, Hermes Agent, and OpenClaw inside an hour" (youtube.com/watch?v=-nG-9vlSkho) represent practitioner-level awareness that the harness layer is contested rather than settled. Teams that locked into a single vendor in late 2025 are "stuck in contracts and missing better tools." The implication: the stratification creates real switching-cost risk before the layers have matured enough to have stable APIs and migration paths between vendors.

Forecast

Three outcomes appear likely on a 6–12 month horizon based on the convergent signals in this cycle.

A benchmark economy will form around each layer. LongMemEval-S already serves the memory layer. Skill-Bench and Agent-Skill-OS serve the skills layer. OSWorld, ScreenSpot, and cua-bench serve the sandbox layer. The missing evaluation infrastructure is for the harness layer and the observability layer — LangSmith Engine is the first serious attempt at the latter. Expect published benchmarks for agent harness composition quality (latency, cost-per-task, cross-layer call overhead) by Q4 2026. Once a layer has a public benchmark, procurement decisions become data-driven, which accelerates both vendor selection cycles and consolidation. The memory layer is roughly 12 months ahead of the skills and sandbox layers on this curve.

Stack lock-in will shift from model to harness. The Granola data point — $1.5B valuation for the context layer, not the inference layer — indicates where the financial system expects durable value to accumulate. The harness (ContextHubBackend in deepagents, the JSON-RPC composition layer in copilot-sdk, the iii Worker/Trigger/Function primitive set) is the point where memory, skills, sandbox, and evaluation layers connect. The company that owns the composition standard without owning the commodity substrate layers (model inference, storage) captures the integration premium. LangChain's Interrupt 2026 launch cluster — seven products spanning Engine, Labs, SmithDB, Fleet free tier, and deepagents in one coordinated day — is the most explicit bid for that position yet. The risk is that coordination rights become lock-in before teams understand the cost.

The agentskills.io standard will determine whether the skills layer stratifies or consolidates. Three independent systems referenced agentskills.io in this cycle: anthropics/skills, github/spec-kit, and iii-hq/iii. A skills specification that any agent harness can read and any skills layer can execute would allow the skills market to develop across vendor lines, analogous to how npm decoupled package authorship from the JavaScript runtime. If agentskills.io achieves that cross-vendor adoption, the skills layer becomes a genuinely open product category with low switching costs between skill providers. If the standard fails to propagate, vendor-specific skill formats (HF skills, Claude skills, Copilot skills, spec-kit extensions) will fragment the market and make the switching-cost problem @clairevo is warning about structurally permanent.

The July 13, 2026 date — when Anthropic's 50%-limit boost and OpenAI's two-month-free Codex offer both expire — is a natural inflection point for the harness lock-in dynamic. Teams that built on a specific harness during the promotional window will face the switching-cost analysis at the same moment. The "free-sample phase" framing that Nate Herk applies to model-level pricing applies with equal force to the harness layer: the promotional periods subsidize adoption to capture usage-pattern data and build integration dependencies, and July 13 is when teams will discover whether they made an architectural choice or incurred a dependency.