Executive Summary

Cloud AI subscriptions are under structural pressure that has become impossible to miss. Within a 48-hour window in late April 2026, six independent signal sources converged on the same conclusion: the economics of selling unlimited cloud AI inference on flat-rate subscriptions are broken. A viral billing bug in Claude Code, GitHub Copilot's degraded Pro tier, Anthropic's documented peak-hour throttling events, a financial analysis pegging OpenAI's 2026 losses at approximately $14 billion, real-world benchmarks showing open-source models running at 63–76% lower cost than closed frontier models, and a two-hour masterclass structured almost entirely around quota-stretching tactics — none of these are isolated incidents. They are symptoms of the same underlying arithmetic.

The break is not a discrete moment but an accelerating direction. AI labs are moving — cautiously at first, then faster — toward per-token billing, product-surface quota separation, and anti-abuse heuristics that frequently produce unintended billing consequences. Enterprises are responding with budget models that treat per-token costs as unbounded variance, which makes cloud AI procurement difficult to approve at CFO level. The resulting pressure is simultaneously forcing pricing structure changes at the major labs and accelerating the maturation of local and open-source alternatives beyond their historical quality ceiling.

For any organization running AI workloads at scale, the practical implication is immediate: model routing strategies — not vendor loyalty — are the correct procurement framework for the current period. The market is sorting itself faster than most vendor roadmaps acknowledge.

What's Shifting

The fundamental shift is from "unlimited access to a model for a flat monthly fee" to "a defined compute budget, billed per token, with hard ceilings per product surface." This is not a new concept in cloud infrastructure — API-based billing by usage has been the norm in cloud compute since the mid-2000s. What is new is the pace at which AI labs are moving in this direction despite having sold an entire user generation on the opposite premise: unlimited, frictionless AI access for a predictable monthly price.

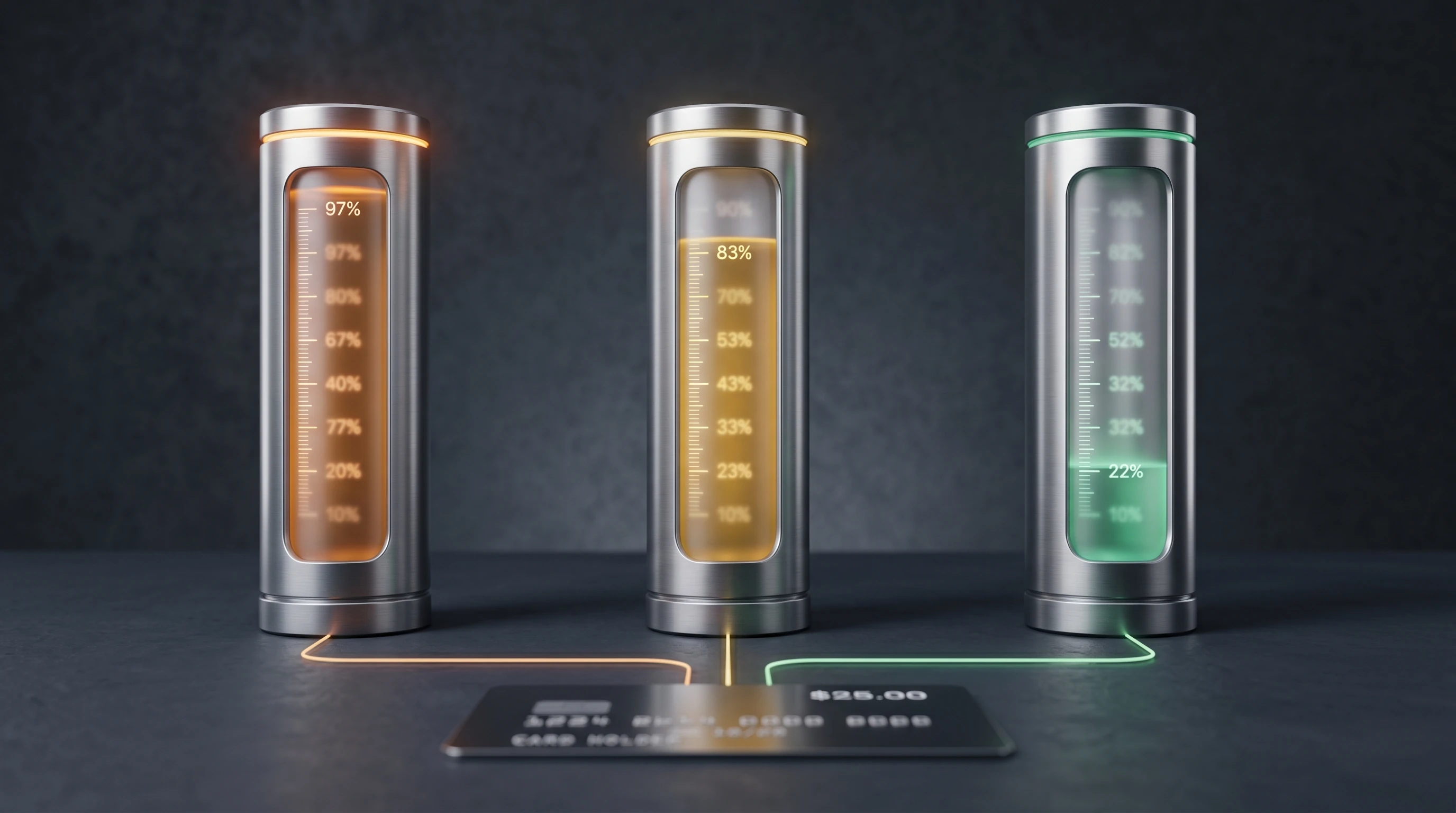

The timeline of deterioration is specific and compressing. GitHub Copilot Pro removed access to Anthropic's Opus model class, slashed usage limits, and stopped accepting new signups — all within the same quarter in 2026. Anthropic reduced Claude Pro Code limits during US peak hours around April 22–26, 2026, a change its head of growth initially characterized as "an experiment" before rolling back under user pressure. Claude Design, launched April 17, 2026, carries a separate weekly compute quota entirely distinct from Claude or Claude Code budgets — meaning a user paying $200/month for the Max-20x plan now navigates three separate resource pools under the same account. Nate Herk's public masterclass on Claude Design found that building a complete brand identity (pitch deck, landing page, mobile app prototype, and animated launch video) consumed approximately 95% of the weekly Claude Design quota, leaving the demonstration video structured almost entirely around techniques for rationing compute rather than using the product freely (Nate Herk, YouTube).

The structural argument behind these decisions is not difficult to locate. Mandar Karhade's widely-read analysis on GoPubby estimates OpenAI burned approximately $9 billion against $13 billion in revenue in 2025, with inference costs to Microsoft alone reaching $12 billion by November 2025. Projected losses for 2026 sit around $14 billion. Anthropic's gross margin, the same analysis reports, has compressed from a 50% target to approximately 40%. The central thesis: "cheap inference" is not a product decision. It is a VC and hyperscaler cross-subsidy — simultaneously funded by the same companies supplying, building, and buying from these labs — and it is one major liquidity event away from disorderly repricing (GoPubby / AI Advances).

Evidence

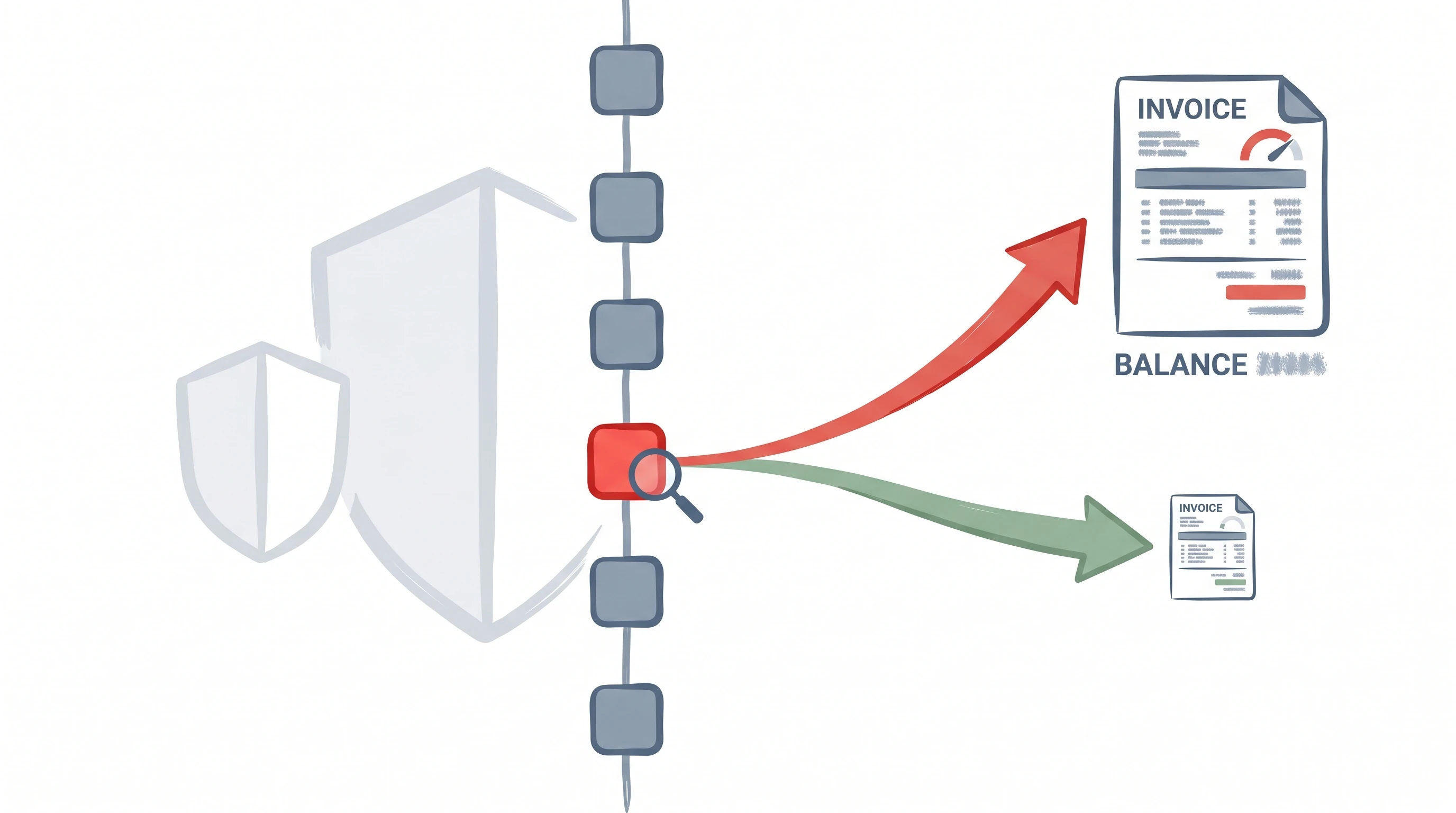

The sharpest data point in this cluster is the Claude Code billing bug documented in a GitHub issue and amplified by Tim Carambat on April 29, 2026. Claude Code's anti-abuse system used a regular expression match over a user's git repository history to detect the presence of competing coding harnesses — specifically, the filename string Hermes.MD (uppercase, .MD extension). Users on Anthropic's most expensive Max-20x plan who had this string anywhere in their project's git history were silently rerouted into "extra usage" billing tiers, regardless of their actual token consumption. Users receiving these charges were well below their plan's defined usage ceiling.

The technical particulars are revealing. The trigger was case-sensitive and extension-specific: lowercase hermes.md did not activate it, nor did .txt variants, nor the word hermes without the uppercase extension. Only the capitalized Hermes.MD pattern — the exact filename format used by Hermes Agent, an open-source alternative harness from Nous Research — routed users into overage billing. This is not a sophisticated runtime inference monitor. It is a string match at billing time, applied against historical records the user may not have knowingly retained in their git history (Tim Carambat, YouTube).

Boris Cherny, the original author of Claude Code, acknowledged the issue on the GitHub bug report as "an overactive anti-abuse system" — a phrase that functions as a technical confirmation of the mechanism. What followed exposed a second, distinct governance problem: Anthropic's head of growth publicly promised refunds and a month of free credits via a personal Twitter account, while comments on the same GitHub issue simultaneously declined reimbursement. Carambat's characterization was pointed: communicating major product-policy decisions through individual employees' personal social media accounts signals organizational immaturity at exactly the moment users require institutional clarity. The contradiction between the public promise and the support-queue response was never formally reconciled.

The mechanism reveals the underlying economics. Anthropic cannot reliably distinguish, in real time, between a user running Claude Code with high inference volume and a user running a competing harness against a different model with the API key idle. The regex-over-git-history workaround is the kind of heuristic a team builds when the actual problem — cost monitoring close to a pricing floor — cannot be solved cleanly. Carambat's reading is explicit: the $200/month Max-20x price does not cover the actual compute cost at current usage patterns, and Anthropic is improvising responses to the resulting deficit (Tim Carambat, YouTube).

The same dynamic appears in independent availability data. Nate B Jones's benchmarking work found Anthropic's 90-day reliability pages showing uptime at approximately "one nine" — around 98% — on multiple services, below the three-nine standard OpenAI was closer to maintaining. Jones noted Anthropic had signed capacity deals for more than 10 gigawatts of compute in the preceding 30 days precisely because Claude demand was outstripping supply. Peak-hour throttling is not a discrete product decision; it is a symptom of infrastructure capacity trailing adoption at a pace the current pricing structure cannot fund (Nate B Jones, YouTube).

Real cost benchmarks from LangChain's Fleet Agent testing provided the market context. Running equivalent agentic tasks across multiple models, LangChain documented: Claude Sonnet 4.6 at $0.19 per run; GLM 5.1 via FireworksAI at $0.07 (63% cheaper); Kimi K2.6 at $0.05 (74% cheaper); and, notably, Anthropic's own Opus 4.7 at $0.045 per run when accessed via the API rather than a subscription tier — 76% cheaper than Sonnet 4.6 on a per-run basis. LangChain CEO Harrison Chase named closed-model cost as "the big theme of 2026" and listed OSS model optimization as an explicit strategic roadmap priority (X / @hwchase17). The cost spread is not marginal. At scale, it is the difference between an AI workload that fits a department budget and one that doesn't.

Countertrends

The local and open-source AI ecosystem has matured faster than most closed-lab analysts have publicly acknowledged. Carambat's direct comparison is instructive: when he first committed code to AnythingLLM, the available local model was Llama 2 with a 4,000-token context window — a model he describes without irony as "a joke." Today, Qwen 3.5 0.8B with a 128,000-token context window runs on consumer-grade hardware and produces results competitive with early mid-tier commercial models. Research in 1-bit weight quantization (BitNet) and TurboQuant is pushing inference efficiency further along the same trajectory.

Open-source model cost convergence is accelerating at the model layer simultaneously. DeepSeek v4's release in late April 2026 — flagged by analyst swyx as shipping "the best open base models" using novel long-context efficiency techniques including Compressed Sparse Attention, hybrid cross-attention, and mixed key-value precision — arrived without the benchmarking emphasis typical of model releases, suggesting the team shipped against a quality bar rather than a performance leaderboard. Kimi K2.6, released under a modified MIT license, demonstrated competitive agentic performance at $0.05 per run in LangChain's testing. The gap between the best open-weight models and closed frontier models is narrowing faster than the benchmark headlines suggest.

Not every signal points toward local AI dominance. The enterprise response to cloud cost volatility is bifurcated rather than uniform. Technical teams — particularly those already running agentic workflows — are actively building model-routing strategies, keeping Claude Code or GPT-5.5 for high-value, high-complexity tasks while routing routine agentic work to local or open-source models where the per-token cost is effectively zero. Non-technical enterprise buyers remain largely locked into per-seat purchasing cycles managed by IT procurement. Their migration to per-token billing is involuntary and often arrives as an unbudgeted invoice, creating CFO-level resistance to AI tool approval that the labs have not yet addressed with coherent pricing communication.

The harness market itself has diversified beyond what most commentary acknowledges. Multiple coding harnesses — pi.dev, Hermes Agent from Nous Research, and Codex — are now legitimate Claude Code alternatives for specific task shapes. The choice of Codex with GPT-5.5 over Claude Code by the Onchain AI Garage builder for a visual-output frontend project on April 29, 2026 is a small but meaningful data point: the builder explicitly named Claude Code as his usual preference and found the quality gap, after switching, narrower than expected. Platform loyalty is weakening because the platforms themselves are no longer holding their value propositions stable.

Forecast

The evidence across these six sources, spanning a 48-hour window of the AI intelligence cycle, points toward three near-term structural developments.

First, explicit per-token pricing tiers will become the norm for coding and agentic AI tools within the next 12 months. The flat-rate $20, $100, and $200-per-month tiers will survive as market-entry price points, but the ceiling — the point at which users are throttled, routed to overage billing, or offered a quota top-up — will be set lower and disclosed more visibly. Tim Carambat's April 2026 prediction of a $500/month Max tier appearing within one to two months is directionally correct regardless of the exact price point. The structural question is not whether pricing changes but how transparently the change is communicated, and whether the labs can manage the transition without the trust damage the Hermes.MD incident caused.

Second, the governance gap identified in the Claude Code billing incident will force structural communication changes at Anthropic and likely at other labs operating under similar cost pressure. The billing bug created legal and reputational exposure the company initially chose to resolve through informal Twitter promises that directly contradicted its own support queue. One serious class-action or regulatory complaint from an affected enterprise customer will change this behavior faster than any internal cultural initiative. The pattern of major product-policy communication via personal employee social media accounts is not sustainable at the company's current scale and valuation trajectory.

Third, the competitive pressure from local and open-source models will intensify rather than stabilize. The two-year trajectory from Llama 2 (4K context, consumer hardware unusable) to Qwen 3.5 0.8B (128K context, consumer hardware capable) is a preview of the next two-year arc for both parameter efficiency and output quality. Labs that do not solve the compute economics problem before that arc closes the quality gap will face a structural customer acquisition problem: users who switched to cheaper local alternatives for economic reasons and found the quality adequate are not likely to return simply because the closed model improved further.

For enterprise buyers navigating this environment today, the operational conclusion is the same regardless of which lab's announcements prompted the review: design for routing, not loyalty. The LangChain Fleet Agent benchmark data, Carambat's usage economics analysis, and Herk's quota playbook all converge on the same posture — use the frontier closed model where it demonstrably outperforms alternatives on the specific task; default to the cheapest adequate option everywhere else. That routing discipline is not a concession to cloud AI's limitations. It is the correct response to a pricing environment that is still in the process of finding its floor.